Voice Agents Don't Need to Be Faster — They Need to Feel Faster

My text-based agents are in production. I’m now building a voice layer on top of them. It hasn’t shipped yet, but I’m far enough in that the differences are visible. These are my working notes on perceived latency: what I’ve tested, what I’ve validated against other teams’ writeups, and what I’m still figuring out.

The pattern that kept coming up: teams optimize the model, get inference faster, reduce network hops. The agent still feels slow. Not obviously broken. Just slightly off, in a way they can’t instrument.

The model isn’t the problem.

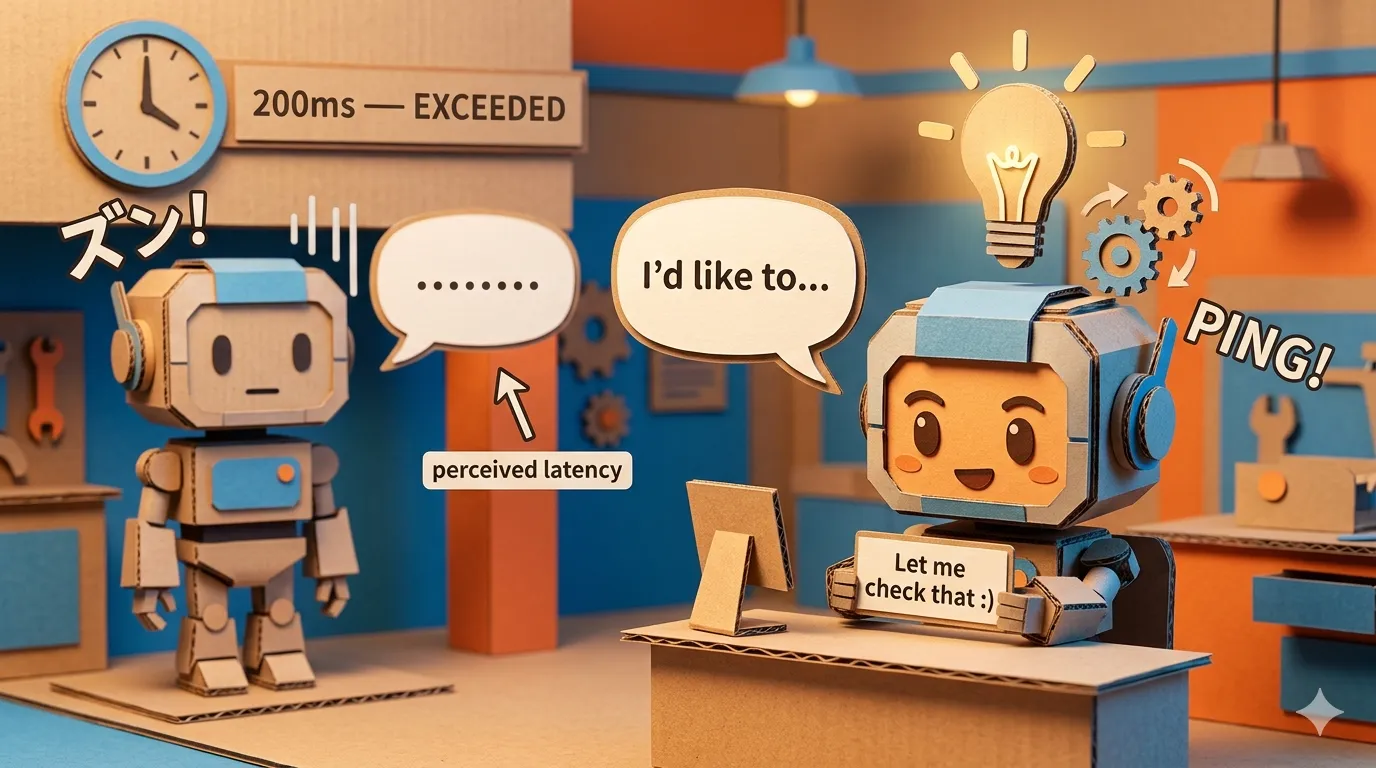

Two agents with identical end-to-end latency can feel completely different. One feels like it’s listening and processing. The other feels like dead air followed by a response. Same numbers on the dashboard. Completely different conversation.

The gap between when the user stops talking and when the agent starts speaking is a UX problem as much as an infrastructure problem. From what I’ve read, most of it is fixable at the orchestration layer, without touching the model at all.

The number that fools you

End-to-end latency measures time. Perceived latency measures trust.

Human conversation has a rhythm. Turn gaps that are too short feel like interruptions. Gaps that are too long feel like inattention. In normal conversation, those gaps average around 200 milliseconds. Most voice agents run well above that. Not because the models are slow. Because the orchestration doesn’t start reasoning until after the turn is confirmed complete.
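To see the gap the user actually hears, rather than pipeline time, one option is to log two timestamps per turn: when the user stopped speaking and when the agent’s first audio started. The sketch below assumes you can capture both. The `Turn` record and its field names are hypothetical, and the 200 ms constant is only the conversational average mentioned above, used as a reference line.

```python
from dataclasses import dataclass
from statistics import median

CONVERSATIONAL_GAP_MS = 200  # typical human turn gap, used as a reference line


@dataclass
class Turn:
    user_speech_end_ms: float    # hypothetical field: when the user stopped talking
    agent_audio_start_ms: float  # hypothetical field: first audible agent output


def turn_gaps_ms(turns):
    """Silence the user heard on each turn; negative means the agent talked over them."""
    return [t.agent_audio_start_ms - t.user_speech_end_ms for t in turns]


def gap_report(turns):
    gaps = turn_gaps_ms(turns)
    mid = median(gaps)
    return {
        "median_gap_ms": mid,
        "over_target_ms": max(0, mid - CONVERSATIONAL_GAP_MS),
        "overlaps": sum(1 for g in gaps if g < 0),
    }


turns = [Turn(1000, 1900), Turn(5000, 5600), Turn(9000, 10100)]  # made-up example turns
print(gap_report(turns))  # {'median_gap_ms': 900, 'over_target_ms': 700, 'overlaps': 0}
```

The point is what gets measured: the interval between the user’s last word and the agent’s first sound, not the time the pipeline spent working.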

That is the part worth fixing.

Start before you’re certain

If you’ve built proper turn detection (see Voice Agents Don’t Know When You’re Done Talking), you get an EagerEndOfTurn event at medium confidence: the user is probably done. The instinct is to wait for EndOfTurn to confirm before doing anything. That instinct adds latency.

The approach I’d try first: start reasoning on EagerEndOfTurn and treat TurnResumed as a cancellation signal. The event names below are illustrative. Your turn-detection layer will emit equivalent signals:

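```python
# begin_reasoning() and speak() are placeholders for your own reasoning and TTS calls.
draft = None

def on_eager_end_of_turn(transcript, context):    # EagerEndOfTurn
    global draft
    draft = begin_reasoning(transcript, context)  # user is probably done: start now

def on_turn_resumed():                            # TurnResumed
    global draft
    if draft is not None:
        draft.cancel()  # user kept talking: discard the speculative work
        draft = None

def on_end_of_turn(transcript, context):          # EndOfTurn
    global draft
    if draft is None:  # no eager signal arrived, or the draft was cancelled
        draft = begin_reasoning(transcript, context)
    response = draft.finalize(transcript)
    draft = None
    speak(response)
```

The transcript almost never changes significantly between EagerEndOfTurn and EndOfTurn. So instead of starting reasoning when the turn ends, you’re finishing it. The perceived gap shrinks without the model responding any faster.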
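A back-of-the-envelope model makes “finishing instead of starting” concrete. Measure everything from the moment the user stops speaking: EagerEndOfTurn fires at `eager_ms` and EndOfTurn confirms at `confirm_ms`, so speculation can hide up to `confirm_ms - eager_ms` of reasoning time. The function names and the numbers below are placeholders for illustration, not measurements.

```python
def sequential_gap_ms(confirm_ms, reasoning_ms, tts_ms):
    """Reasoning starts only once EndOfTurn is confirmed."""
    return confirm_ms + reasoning_ms + tts_ms


def speculative_gap_ms(eager_ms, confirm_ms, reasoning_ms, tts_ms):
    """Reasoning starts at EagerEndOfTurn, but nothing is spoken before EndOfTurn.

    Assumes the transcript didn't change and finalizing the draft is negligible.
    """
    return max(confirm_ms, eager_ms + reasoning_ms) + tts_ms


# Placeholder timings in ms, measured from the moment the user stops speaking.
eager, confirm, reasoning, tts = 200, 700, 600, 250
print(sequential_gap_ms(confirm, reasoning, tts))          # 1550
print(speculative_gap_ms(eager, confirm, reasoning, tts))  # 1050
```

The saving is `min(reasoning_ms, confirm_ms - eager_ms)`: here 500 ms, the full head start, because reasoning outlasts it. Reasoning time itself never changed.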

The catch: everything you start here must be cancellable. If the user resumes and you can’t cleanly cancel the speculative work, you end up with stale responses. Cancellability isn’t optional. It’s the precondition that makes speculation safe.
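Here is one way to get that cancellability in Python, as a sketch: each speculative draft runs as an `asyncio` task, TurnResumed cancels it, and EndOfTurn reuses the draft only if the transcript is unchanged, otherwise it starts over. `SpeculativeTurn`, `fake_reason`, and `demo` are hypothetical names, and restarting on any transcript change is a simplification of the `finalize` step above. Note that `Task.cancel()` only interrupts the coroutine at its next `await`; a request already sent to a remote tool is not undone.

```python
import asyncio


class SpeculativeTurn:
    """Starts reasoning on EagerEndOfTurn and cancels it on TurnResumed."""

    def __init__(self, reason):
        self._reason = reason      # async callable: transcript -> response
        self._task = None          # in-flight speculative draft, if any
        self._transcript = None    # transcript the draft was started from

    def on_eager_end_of_turn(self, transcript):
        self.on_turn_resumed()     # drop any older draft first
        self._transcript = transcript
        self._task = asyncio.create_task(self._reason(transcript))

    def on_turn_resumed(self):
        if self._task is not None:
            self._task.cancel()    # raises CancelledError at the draft's next await
            self._task = None

    async def on_end_of_turn(self, transcript):
        # Reuse the draft only if it was built from the same transcript.
        if self._task is None or transcript != self._transcript:
            self.on_turn_resumed()
            self._transcript = transcript
            self._task = asyncio.create_task(self._reason(transcript))
        task, self._task = self._task, None
        return await task


async def fake_reason(transcript):
    await asyncio.sleep(0.05)      # stand-in for model and tool latency
    return f"answer to: {transcript}"


async def demo():
    turn = SpeculativeTurn(fake_reason)
    turn.on_eager_end_of_turn("book a table")
    turn.on_turn_resumed()         # user kept talking: draft discarded
    turn.on_eager_end_of_turn("book a table for two")
    return await turn.on_end_of_turn("book a table for two")


print(asyncio.run(demo()))  # answer to: book a table for two
```

The design choice that matters is that every draft lives in exactly one place (`self._task`), so cancelling it is a single call rather than a hunt through callbacks.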

The acknowledgment cue

Sometimes reasoning takes longer: a tool call, a database lookup, a multi-step decision. Speculative reasoning helps, but there’s still a noticeable gap.

Silence in that gap degrades trust fast. The user assumes something broke or that the agent didn’t hear them.

The fix is a short acknowledgment: “Let me check that.” Not an apology, not an explanation. Just a cue that processing is happening. Brief, then normal speech resumes.

A non-verbal version of this works too: play a subtle keyboard typing sound during the gap. Users read typing sounds as the other party taking notes or looking something up. It signals active processing without using the voice channel at all. The psychology is the same — silence breaks trust, any sound that signals effort holds it.
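The typing-sound idea maps onto a simple pattern: start a looping cue alongside the slow work and cancel it the moment the work finishes. A minimal `asyncio` sketch; `with_typing_cue`, `play_clip`, and the stand-in coroutines are hypothetical, and real playback would go through whatever audio output your voice stack exposes.

```python
import asyncio


async def with_typing_cue(work, play_clip):
    """Loop a short non-verbal cue until `work` finishes, then stop it.

    `play_clip` is a hypothetical async callable that plays one short clip
    (for example, a second of keyboard typing). It must actually await
    playback, or the loop below would never yield to the event loop.
    """
    async def loop_cue():
        while True:
            await play_clip()

    cue = asyncio.create_task(loop_cue())
    try:
        return await work          # the actual tool call or lookup
    finally:
        cue.cancel()               # silence the cue as soon as work ends or fails


async def fake_clip():
    await asyncio.sleep(0.01)      # stand-in for playing a short clip


async def lookup():
    await asyncio.sleep(0.05)      # stand-in for a slow database lookup
    return "found 3 orders"


print(asyncio.run(with_typing_cue(lookup(), fake_clip)))  # found 3 orders
```

As written, the cue starts immediately; in practice you would probably only start it once the gap passes a threshold, so fast answers stay cue-free.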

```python
# reasoning_will_exceed_threshold(), speak_acknowledgment(), run_reasoning() and
# speak() are placeholders for your own orchestration calls.
def on_end_of_turn(transcript):                   # EndOfTurn
    # Only fill the gap when reasoning is expected to take longer than ~300 ms.
    if reasoning_will_exceed_threshold(transcript, threshold_ms=300):
        speak_acknowledgment("Let me check that.")
    result = run_reasoning(transcript)
    speak(result)
```

This sounds minor. Teams I’ve talked to say it isn’t. The same system feels significantly more responsive just from adding this, with no change to actual latency. Users judge responsiveness by feedback continuity, not by clock time. The acknowledgment tells them the system heard them. That’s enough to hold trust through the wait.

One rule: never deliver an acknowledgment during a user’s turn. Never interrupt to insert a filler. It only fires when the gap would otherwise be silence.
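`reasoning_will_exceed_threshold` in the snippet above has to predict the delay before it happens. A simpler alternative, swapped in here and not something these notes claim to use, is to race the reasoning against a timer and speak the cue only if the deadline passes and the user isn’t talking. A sketch; `respond_with_ack`, `speak`, and `user_is_speaking` are hypothetical hooks into your TTS and turn-detection layers.

```python
import asyncio


async def respond_with_ack(reasoning, speak, user_is_speaking,
                           threshold_ms=300, ack="Let me check that."):
    """Speak an acknowledgment only if reasoning is still running after threshold_ms.

    Timer-based variant: rather than predicting the delay up front, race the
    work against a deadline. `speak` is async; `user_is_speaking` returns a bool.
    """
    task = asyncio.ensure_future(reasoning)
    done, _ = await asyncio.wait({task}, timeout=threshold_ms / 1000)
    if not done and not user_is_speaking():
        await speak(ack)           # fills a gap that would otherwise be silence
    result = await task
    await speak(result)
    return result


spoken = []


async def fake_speak(text):
    spoken.append(text)


async def slow_lookup():
    await asyncio.sleep(0.05)      # longer than the 10 ms threshold used below
    return "Your order ships Tuesday."


asyncio.run(respond_with_ack(slow_lookup(), fake_speak, lambda: False, threshold_ms=10))
print(spoken)  # ['Let me check that.', 'Your order ships Tuesday.']
```

`asyncio.wait` with a timeout does not cancel the task, so the reasoning keeps running while the acknowledgment plays; fast answers finish inside the window and are spoken with no cue at all.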

The third layer

There’s a level beyond this that’s more work but compounds everything: adapting pacing to the conversation.

Static pacing fails. Same speed, same rhythm regardless of context. When someone is rushed, slower responses add friction. When someone is uncertain, a fast response feels dismissive.

The signals are already there in the speech stream. From what I’ve read, the hypothesis is that rapidly changing partial transcripts correlate with uncertainty, and that high speech rate with short turns signals someone who wants efficiency. I can’t verify this from production data. But the timing signals from transcription alone seem enough to inform how the agent paces and shapes responses, without building sentiment analysis on top.
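Both signals in that hypothesis can be computed from the transcription stream alone. A sketch, assuming your ASR delivers partial transcripts plus the final word count and turn duration: it measures speech rate in words per second, and uses how often partials rewrite already-settled words as one possible proxy for “rapidly changing.” Every name and threshold here is hypothetical and untested against real traffic.

```python
from dataclasses import dataclass


@dataclass
class TurnSignals:
    words: int             # words in the final transcript
    duration_s: float      # how long the user spoke
    partials: list[str]    # partial transcripts, in arrival order


def revision_rate(partials):
    """Share of partial updates that rewrote settled words instead of extending them.

    The last word of each partial is ignored, since ASR often revises the word
    still being spoken.
    """
    if len(partials) < 2:
        return 0.0
    rewrites = 0
    for prev, cur in zip(partials, partials[1:]):
        settled = prev.split()[:-1]
        if cur.split()[:len(settled)] != settled:
            rewrites += 1
    return rewrites / (len(partials) - 1)


def pacing_hint(sig, fast_wps=3.0, short_turn_words=8, unsure_revisions=0.3):
    """Map timing signals to a coarse pacing hint. All thresholds are placeholders."""
    if revision_rate(sig.partials) >= unsure_revisions:
        return "slower"    # hypothesis: heavy revision correlates with uncertainty
    wps = sig.words / sig.duration_s if sig.duration_s > 0 else 0.0
    if wps >= fast_wps and sig.words <= short_turn_words:
        return "brisk"     # hypothesis: fast, short turns signal wanting efficiency
    return "default"


quick = TurnSignals(6, 1.5, ["what", "what time", "what time do you open"])
unsure = TurnSignals(10, 5.0, ["I want", "I wanted to", "I think I", "I think I need to change"])
print(pacing_hint(quick), pacing_hint(unsure))  # brisk slower
```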

What doesn’t work

Speculation on EagerEndOfTurn breaks if your reasoning isn’t cancellable. If a tool call is already in flight when TurnResumed fires, you can’t cleanly discard it. You get a stale response delivered after the user has already moved on. Cancellability has to be designed in from the start. Adding it later is painful.
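When a call really can’t be cancelled, one common mitigation (a swapped-in technique, not something these notes claim to have tested) is to tag each piece of speculative work with the turn it was started for and drop any result whose turn has moved on. That keeps the stale response from being spoken. It does not undo side effects the tool call already had, so it doesn’t remove the need to design for cancellation. `TurnGuard` is a hypothetical name.

```python
class TurnGuard:
    """Drops results that belong to a turn the user has already moved past."""

    def __init__(self):
        self.current = 0   # id of the live turn

    def new_turn(self):
        # Call on TurnResumed or any new user speech; in-flight work becomes stale.
        self.current += 1
        return self.current

    def deliver(self, turn_id, result, speak):
        """Speak `result` only if it was produced for the live turn."""
        if turn_id != self.current:
            return False   # stale: stay silent rather than answer an old question
        speak(result)
        return True


guard = TurnGuard()
started_for = guard.current       # tool call launched speculatively for turn 0
guard.new_turn()                  # user resumes before it returns
print(guard.deliver(started_for, "stale answer", print))  # False
```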

Acknowledgment cues stop working if they fire too often. If every response starts with “Let me check that,” users stop hearing it as a signal and start hearing it as filler. It only earns trust when it’s rare: the user has to feel the agent genuinely needed a moment.
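One way to keep the cue rare is a simple per-conversation budget: allow it at most once every few turns and let other long gaps go without a verbal cue. A minimal sketch; `AckBudget` and its default spacing of four turns are hypothetical placeholders, not tuned values.

```python
class AckBudget:
    """Allows an acknowledgment cue at most once every `min_turns_between` turns."""

    def __init__(self, min_turns_between=4):
        self.min_turns_between = min_turns_between  # placeholder; tune on real calls
        self._turn = 0
        self._last_ack_turn = None

    def next_turn(self):
        self._turn += 1

    def allow(self):
        """Return True (and record it) if a cue may fire on this turn."""
        recent = (self._last_ack_turn is not None
                  and self._turn - self._last_ack_turn < self.min_turns_between)
        if recent:
            return False   # too soon: another cue would start to sound like filler
        self._last_ack_turn = self._turn
        return True


budget = AckBudget(min_turns_between=3)
decisions = []
for _ in range(4):
    decisions.append(budget.allow())
    budget.next_turn()
print(decisions)  # [True, False, False, True]
```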

Adaptive pacing from speech signals is unreliable in noisy environments. Background noise, phone audio compression, and overlapping speakers corrupt the timing signals. I’m treating it as a soft hint, not a rule.
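Treating the pacing signal as a soft hint can be as simple as capping how far it can move the voice and scaling that by how much you trust the audio. A sketch, assuming the pacing logic produces a coarse hint such as “brisk” or “slower” and that your TTS accepts a speaking-rate multiplier; `speaking_rate`, `signal_quality`, and the 15% cap are hypothetical.

```python
def speaking_rate(hint, signal_quality, max_shift=0.15):
    """Convert a pacing hint into a TTS speaking-rate multiplier, as a soft hint only.

    signal_quality is a hypothetical 0..1 confidence in the timing signals, to be
    lowered for noisy, compressed, or overlapping audio. At 0 the hint is ignored;
    at 1 the rate moves by at most +/- max_shift.
    """
    direction = {"brisk": 1.0, "slower": -1.0}.get(hint, 0.0)
    quality = min(max(signal_quality, 0.0), 1.0)   # clamp to [0, 1]
    return 1.0 + direction * max_shift * quality
```

With `signal_quality` at 0, as you might set it on a noisy phone line, the function returns 1.0 regardless of the hint, so a misread signal falls back to static pacing instead of making things worse.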

Where to invest

Three techniques, ordered by impact:

- Speculative reasoning on EagerEndOfTurn: biggest single gain, closes most of the perceived gap
- Acknowledgment cues during tool calls: high trust impact, low complexity, add it early
- Adaptive pacing from speech signals: meaningful quality improvement, requires more instrumentation

None of these require a faster model. They’re all orchestration-level decisions about what to do during the gaps.

The agent that feels attentive isn’t necessarily the fastest one. It’s the one that communicates clearly while it thinks.